It's not just Disney that has tiny robots capable of performing complex movements. Google DeepMind recently published a paper describing how deep reinforcement learning can be used to train low-cost miniature humanoid robots to perform complex movement skills. The London-headquartered research lab was renamed Google DeepMind as part of a recent restructuring in which the Google Brain team was merged with the DeepMind team.

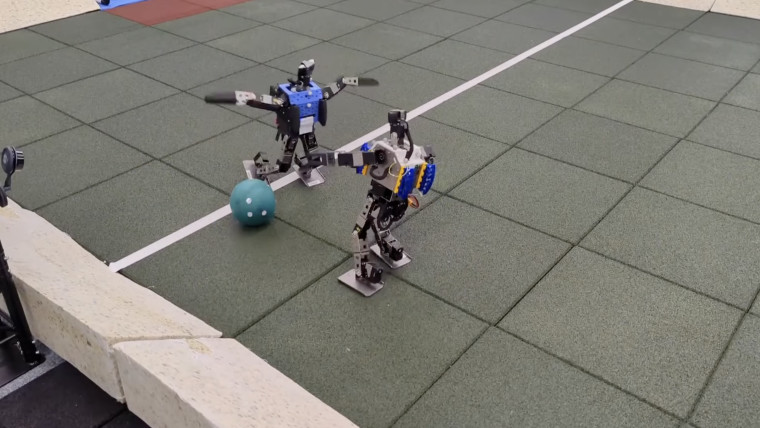

The researchers trained small humanoid robots to play simplified one-vs-one soccer matches on a fixed-size 5 m x 4 m court. For that purpose, they used the Robotis OP3 platform, which has 20 controllable joints and various sensors. Their goal was to see whether the robots could learn "dynamic movement skills such as rapid fall recovery, walking, turning, kicking, and more." The robots were first taught individual skills separately in simulation, and those skills were then merged end-to-end in a self-play setting.

In one of the videos created by the researchers, a robot tasked with scoring a goal is pushed multiple times to test its "robustness to pushes." The robot falls to the ground and then gets back up on its own to score a goal.

The researchers added that the robot players can automatically transition between these skills "in a smooth, stable, and efficient manner - well beyond what is intuitively expected from the robot." The robots also developed a basic strategic understanding of the game, learning to anticipate ball movements and block opponent shots. While their primary job was to score goals, experiments showed that they walked 156% faster, took 63% less time to get up, and kicked 24% faster than a scripted baseline.
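Percentage comparisons like these are easy to misread, so here is a minimal Python sketch of the arithmetic. It assumes that "X% faster" means speed relative to the scripted baseline and that "X% less time" means duration relative to the baseline; the function names are hypothetical and are not taken from the paper.

```python
def speed_multiplier(percent_faster: float) -> float:
    """Convert 'X% faster' into a multiple of the baseline speed."""
    return 1 + percent_faster / 100


def time_fraction(percent_less_time: float) -> float:
    """Convert 'X% less time' into a fraction of the baseline duration."""
    return 1 - percent_less_time / 100


# Figures quoted in the article, all relative to the scripted baseline.
walk = speed_multiplier(156)   # walked 156% faster
get_up = time_fraction(63)     # took 63% less time to get up
kick = speed_multiplier(24)    # kicked 24% faster

print(f"walking speed: {walk:.2f}x baseline")
print(f"get-up time:   {get_up:.2f}x baseline")
print(f"kicking speed: {kick:.2f}x baseline")
```

Under those assumptions, the learned policy walks at about 2.56 times the baseline speed, gets up in about 0.37 times the baseline time, and kicks at about 1.24 times the baseline speed.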

Source: DeepMind via Ars Technica